Montage Video Maker: Create Viral Shorts From Long Videos


You already have more short-form content than you think.

If you publish YouTube videos, podcasts, webinars, interviews, demos, or training sessions, your problem usually isn’t a lack of ideas. It’s that your best ideas are buried inside long recordings that nobody on your team has time to cut into shorts.

That’s where a montage video maker changes from a nice editing utility into a content system. Used well, it doesn’t just help you clip highlights. It helps you turn one finished asset into a repeatable publishing engine for TikTok, Reels, and Shorts, without rebuilding your workflow around manual editing.

The key is to treat AI as a decision assistant, not a substitute for editorial judgment. Let it surface candidates, trim the busywork, handle reframing and captions, and speed up exports. Then use your judgment where it matters most: choosing the right source material, picking the strongest hook, tightening pacing, and aligning every clip with platform intent.

The Hidden Gold in Your Long-Form Content

Most creators make one mistake with long-form video. They treat the publish button as the finish line.

It isn’t. It’s the start of the second half of the workflow.

A strong podcast episode or YouTube video usually contains multiple standalone moments: a sharp opinion, a concise explanation, a surprising mistake, a useful framework, a clean before-and-after, or a line that makes someone stop scrolling. Those moments are already paid for. You already spent the time scripting, recording, reviewing, and publishing them. What’s missing is a fast way to extract them.

That’s why AI-powered montage tools matter. They close the gap between “I know there are good clips in here” and “I have five publish-ready shorts by this afternoon.” If you’re working from interviews, webinars, tutorials, or talking-head videos, a long to short video converter makes that shift practical.

Why old content often outperforms new clip-first content

When creators force themselves to make shorts from scratch, quality usually drops. The ideas get thinner, the hooks get louder, and the content starts feeling disposable.

Repurposed clips often work better because the substance is already there. The long-form version has context, real examples, and a complete thought behind the soundbite. Your job is to isolate the part that stands on its own.

Practical rule: Don’t ask, “Can I make a clip from this video?” Ask, “Which moments solve one problem fast enough to earn attention in a vertical feed?”

What a content engine actually looks like

A content engine is simple:

- One source asset: a webinar, interview, tutorial, or podcast episode.

- Several candidate moments: clips with one clear takeaway each.

- Quick editorial pass: tighten the open, clean captions, fix framing, and remove dead air.

- Platform-specific publishing: distribute the same insight in the format each channel prefers.

That’s the strategic use of a montage video maker. Not random clipping. Systematic extraction.

Preparing Your Video for AI Analysis

Bad inputs produce bland clips. That part hasn’t changed, even though the tools have.

Editing technology has changed over a long arc. According to Massive’s history of video editing, it runs from the Moviola in 1924, to early digital systems like the CMX 600 in 1971 (which cost around $250,000 USD), to current AI-driven tools that turn raw footage into social clips quickly for platforms at massive scale, including TikTok with over 2 billion users. The workflow is easier now. The need for good source material isn’t.

Choose source videos with clip density

Not every long video is worth processing.

A good source video has frequent moments that make sense on their own. Interviews with sharp answers, webinars with clear teaching segments, podcasts with debate, and tutorials with concise demonstrations usually produce stronger shorts than rambling live streams.

Use this checklist before you upload:

- Clear speaking moments: The speaker finishes thoughts cleanly instead of wandering through them.

- Topic changes with obvious boundaries: Distinct sections give the AI cleaner opportunities to cut.

- Audience relevance: The content answers questions people already ask in comments, sales calls, or DMs.

- Strong opinions or memorable phrasing: Neutral language rarely turns into a high-performing short.

- Minimal noise: Crosstalk, echo, and background music make analysis harder.

Prioritize audio over visuals

Creators obsess over camera quality and ignore what drives most AI clip detection: words.

If the audio is muddy, transcript quality drops. If transcript quality drops, the AI has less confidence in the subject matter, hook lines, and clean clip boundaries. A sharp 4K talking head with echo can perform worse in repurposing than a simpler recording with clean speech.

Here’s the practical order of importance for source prep:

| Priority | What to check | Why it matters |
| --- | --- | --- |
| 1 | Clean vocal audio | Helps transcription and hook detection |
| 2 | Clear topic structure | Makes clip segmentation easier |
| 3 | Speaker energy | Gives you more usable openings |
| 4 | Visual stability | Helps reframing and mobile crops |
| 5 | Resolution | Useful, but not the first bottleneck |

Prep the file like an editor, not an uploader

A little organization saves time later.

- Trim obvious dead zones first: Remove countdowns, long intros, setup chatter, or empty ending screens.

- Use the right version: Upload the final cut, not a draft with duplicate takes.

- Keep titles or notes nearby: Topic summaries help you judge the AI’s suggestions faster once clips are generated.

Clean source footage doesn’t guarantee great shorts. It just removes excuses from the edit.

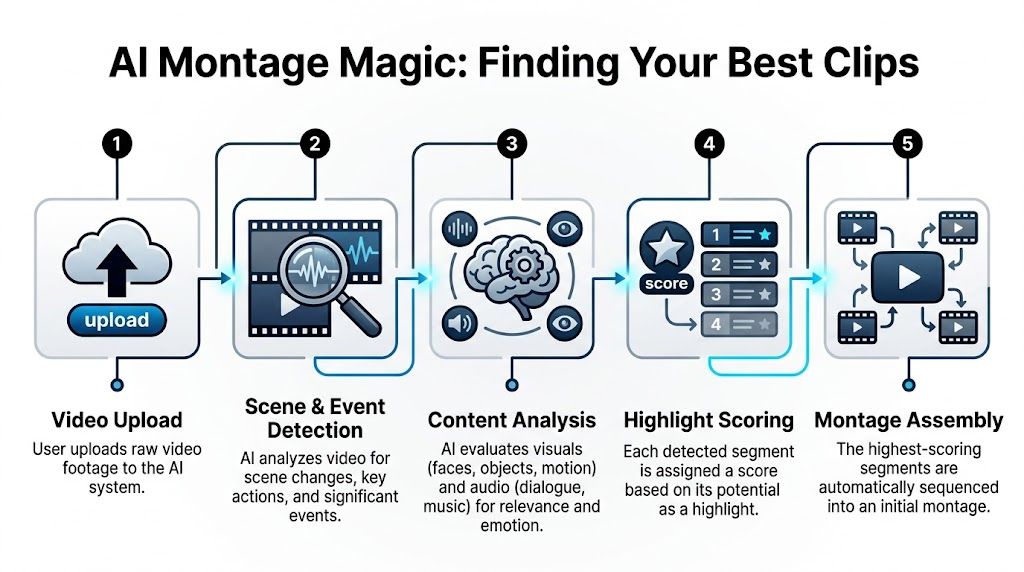

How AI Magically Finds Your Best Clips

Most creators treat AI clipping like a mystery. Upload video, wait, hope for magic.

What’s happening is more practical than magical. A montage video maker is scoring your footage from several angles at once, then surfacing the parts most likely to work as standalone clips.

It starts with transcript-level understanding

The AI first turns speech into text. That transcript becomes the backbone of clip detection.

Once the tool can read the full conversation, it can identify where a topic begins, where a key point lands, and where a speaker says something with enough standalone meaning to work outside the full video. This is why explanation-heavy content often repurposes well. The spoken content gives the system more to work with.

Then it looks for moments with stopping power

Not every useful sentence is a useful opening.

For short-form, the beginning matters disproportionately. AI tools try to detect hooks: moments where the speaker asks a sharp question, makes a bold claim, delivers a surprising contrast, or states a pain point in direct language. These are the lines most likely to earn the next second of attention.

Data cited by Visla’s montage guide says shorts under 15 seconds achieve 2.5x higher completion rates on YouTube Shorts, TikTok favors 7 to 15 second clips with 40% higher shares, and 68% of creators report struggling with short-form optimization. That’s why AI systems trim aggressively. They’re not trying to preserve the full argument. They’re trying to preserve the highest-yield moment.

Visual and audio signals shape the ranking

Transcript analysis is only part of it.

The better tools also inspect visual changes, speaker framing, pauses, pacing, and moments where energy rises. If a person leans in, changes tone, answers a question directly, or lands a statement cleanly, that segment often scores higher than a section with the same topic but weaker delivery.

That scoring logic is useful because most human editors already do this intuitively. AI does the first pass faster.
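To make the idea of multi-signal scoring concrete, here is a minimal sketch of a ranked shortlist. It is not how any particular tool works: the signal names, the 0 to 1 scales, and the weights are all assumptions made for illustration, and real products do not publish theirs.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen for illustration only.
WEIGHTS = {"hook": 0.4, "standalone": 0.3, "energy": 0.2, "framing": 0.1}


@dataclass
class Candidate:
    start: float       # seconds into the source video
    end: float
    hook: float        # 0..1, strength of the opening line
    standalone: float  # 0..1, does it make sense without context?
    energy: float      # 0..1, delivery and pacing
    framing: float     # 0..1, how cleanly the speaker is framed


def score(c: Candidate) -> float:
    """Weighted sum of the per-signal scores."""
    return sum(WEIGHTS[name] * getattr(c, name) for name in WEIGHTS)


def shortlist(candidates: list[Candidate], top_n: int = 5) -> list[Candidate]:
    """Return the top_n candidates, highest score first."""
    return sorted(candidates, key=score, reverse=True)[:top_n]
```

The point of the sketch is the shape of the output: a ranked list you review from the top, not a verdict you accept.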

Why the first batch of clips is rarely final

The initial output is a draft selection, not a publishing decision.

Use it like a ranked shortlist. Review the strongest candidates first. Don’t ask whether the AI found “the perfect clips.” Ask whether it found enough strong starts to make the review process efficient. In practice, that’s the core value.

If you want a tool purpose-built for this kind of repurposing workflow, an AI shorts maker should help with clip extraction, framing, and social formatting in one pass.

The fastest workflow is not “accept every AI suggestion.” It’s “let the AI narrow the search space, then apply taste.”

Music matters more than most creators think

A clip can have a strong spoken hook and still feel flat if the audio bed clashes with the pacing.

That’s why it helps to understand AI music remixer workflows when you’re refining shorts. Even if your montage tool already offers music options, learning how remixing changes pacing and emotional contour will make you better at choosing what belongs under a clip and what distracts from it.

Refining and Perfecting Your AI-Generated Shorts

This is the stage where average repurposing turns into usable output.

AI gets rid of the worst part of the process, which is scrubbing through long footage looking for candidates. It doesn’t remove the need for editorial judgment. Your job now is to make small changes that materially improve watchability.

Fix the first second first

Most weak shorts fail before the insight even arrives.

When reviewing AI-generated candidates, start by inspecting the opening frame and the first line of dialogue. If the clip begins with a throat clear, a filler phrase, or context that only makes sense inside the original episode, tighten it immediately.

Good first-second edits usually involve one of these moves:

- Cut the runway: Remove “so,” “and,” “I think,” or “as I said earlier.”

- Promote the payoff: Start on the strongest claim, then add context after if needed.

- Lead with the question: If the clip answers a common question, open on the question itself.

- Trim the exit hard: Social clips rarely need a slow landing.
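If you work from a transcript, the "cut the runway" move can be roughed out in a few lines. This is a sketch under assumptions: the filler list comes from the examples above, and `cut_runway` is a hypothetical helper that only cleans the text. Moving the actual in-point of the clip still needs word-level timestamps from your editor or transcript.

```python
import re

# Filler openers taken from the list above; extend to match your own speakers.
FILLERS = ["as i said earlier", "i think", "so", "and"]

_FILLER_RE = re.compile(
    r"^\s*(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b[\s,]*",
    re.IGNORECASE,
)


def cut_runway(line: str) -> str:
    """Strip leading filler phrases, then re-capitalize the first letter."""
    previous = None
    while previous != line:  # repeat so stacked fillers ("So, I think") all go
        previous = line
        line = _FILLER_RE.sub("", line, count=1)
    return line[:1].upper() + line[1:]
```

For example, `cut_runway("So, I think the biggest mistake is waiting.")` returns `"The biggest mistake is waiting."`, while a line that merely starts with similar letters, such as "Some people wait too long.", is left alone.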

Clean captions like a copy editor

Auto-captions save time, but raw captions rarely look polished.

Treat them as draft text. Correct obvious transcript errors, then improve readability. Short line breaks, consistent capitalization, and selective emphasis usually matter more than fancy animation. If your spoken line is dense, simplify the subtitle phrasing while preserving meaning.

A useful standard is this: if the viewer watches on mute, can they still follow the argument without strain?
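Short line breaks are easy to enforce mechanically once the wording is right. The sketch below uses Python's standard `textwrap` module to split caption text into small on-screen cards. The 28-character and two-line limits are assumptions for a vertical frame, not platform rules, so tune them to your font and safe area.

```python
import textwrap

MAX_CHARS = 28  # assumed per-line limit for a vertical frame, not a platform rule
MAX_LINES = 2   # assumed number of lines shown on screen at once


def caption_cards(text: str) -> list[str]:
    """Split caption text into short on-screen cards of at most MAX_LINES lines."""
    lines = textwrap.wrap(text, width=MAX_CHARS)
    return [
        "\n".join(lines[i:i + MAX_LINES])
        for i in range(0, len(lines), MAX_LINES)
    ]
```

This only handles layout. Fixing transcript errors and simplifying dense phrasing is still a manual pass.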

Editing note: Captions shouldn’t mirror every spoken flaw. They should carry the message cleanly.

Reframing is technical, but the decision is editorial

Vertical conversion is where many AI-generated clips look competent but forgettable.

Automatic reframing usually keeps a face centered, and that’s a good starting point. But “subject in frame” isn’t the same as “best composition.” If there are two speakers, a product demo, slides, or hand gestures, review the crop manually. The right crop should support the point, not just chase movement.
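The geometry behind a manual crop is simple, which is why the decision is the hard part. As a rough sketch, assuming a landscape source and a crop that uses the full frame height, the function below computes a 9:16 window centered on whatever you decide should stay in view. `vertical_crop` and `subject_x` are hypothetical names; the editorial choice is which x position you pass in (the speaker's face, the slide, the product).

```python
def vertical_crop(frame_w: int, frame_h: int, subject_x: float) -> tuple[int, int, int, int]:
    """Return (left, top, width, height) of a 9:16 crop using the full frame height.

    subject_x is the horizontal center of whatever should stay in view, in pixels.
    """
    # Round the width down to an even number, which most encoders prefer.
    crop_w = (frame_h * 9 // 16) // 2 * 2
    if crop_w > frame_w:
        raise ValueError("frame is too narrow for a full-height 9:16 crop")
    left = round(subject_x - crop_w / 2)
    left = max(0, min(left, frame_w - crop_w))  # keep the window inside the frame
    return left, 0, crop_w, frame_h
```

On a 1920x1080 source, a subject at the center gives a 606-pixel-wide window starting at x = 657. A subject near either edge gets a window clamped to the frame boundary, which is often where a manual review catches a cut-off gesture or slide.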

What to check in reframed clips

| Element | Good result | Weak result |
| --- | --- | --- |
| Speaker crop | Eyes and expression remain readable | Forehead cut off or too much empty space |
| Multi-person shot | Active speaker stays prioritized | Frame jumps unpredictably |
| Demo footage | UI or object remains visible | Important visual proof gets cropped out |
| Caption placement | Text doesn’t cover mouths or key visuals | Subtitles block the reason to watch |

Use montage grammar, not just trimming

A short clip still benefits from editorial rhythm.

One professional guideline worth keeping in mind is the 3:2:1 rule: 3 wide shots, 2 medium shots, 1 close-up. According to The Montage Maven’s breakdown, applying this ratio can increase viewer retention by up to 40% compared with uniform shot lengths. Even if you’re editing social clips rather than cinematic montages, the principle still holds. Variety keeps attention.

In practical terms, that means if your source footage gives you options, don’t leave every insert at the same visual distance. Mix context, action, and detail. If your clip is mostly talking-head footage, simulate variety with punch-ins, B-roll inserts, screen captures, or cropped close frames during the emotional or high-importance line.

Add a repeatable brand layer

You want consistency, but not template fatigue.

Build a light brand system for shorts:

- One caption style: same font family, same emphasis logic

- One color hierarchy: a primary color for highlights, a neutral base for body text

- One intro treatment: subtle, not a loud branded bumper

- One end treatment: optional CTA or account identifier

That approach keeps clips recognizable without making every post feel machine-produced.


Know when to override the AI

Sometimes the AI picks the line that sounds exciting rather than the line that converts.

A flashy opinion may get attention. A concise practical takeaway may drive saves, shares, or profile visits. If you’re repurposing for business goals, choose clips that align with audience intent, not just novelty.

That usually means favoring clips that do one of these well:

- Answer a narrow problem quickly

- Reframe a common mistake

- Show a concrete before-and-after

- State a strong opinion with context

- Deliver a memorable line that still teaches something

The best montage video maker can accelerate selection. It can’t decide your positioning for you.

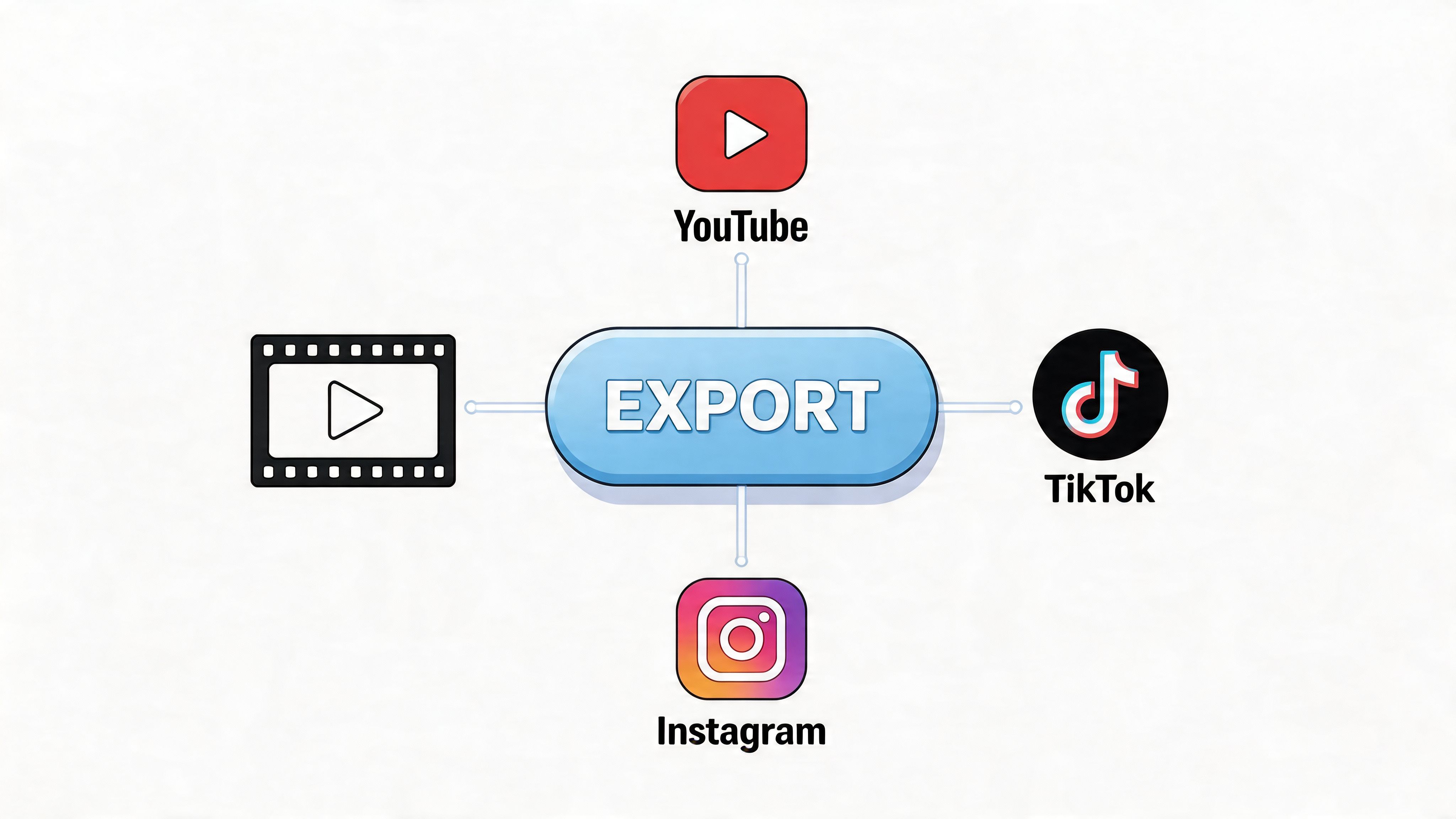

Exporting and Optimizing for Each Platform

A strong short can still underperform if the export is sloppy or the posting workflow is lazy.

At this stage, many teams waste the value they created upstream. They finish the edit, download one file, cross-post it everywhere, and hope the algorithm sorts it out. That approach misses one of the biggest advantages of repurposing: you can adapt the same idea for different viewing contexts with minimal extra work.

Start with clean export settings

For social-first clips, consistency matters more than experimentation.

Modern montage apps commonly support Full HD 1080p exports for posting to TikTok, Reels, and Shorts, which keeps quality high enough for mobile viewing without overcomplicating delivery, as described in the Montage Video Maker & Editor App Store listing. Stick with platform-friendly vertical formatting, confirm captions remain legible after export, and watch the final file once before publishing. Rendering mistakes are more common than people admit.

Match the clip to the platform’s behavior

A clip doesn’t fail only because of editing. It can fail because the promise and the platform don’t match.

Think in terms of user intent:

- TikTok: strongest for punchy hooks, sharper opinions, and quick pattern breaks

- Instagram Reels: often benefits from polished presentation and visually clean edits

- YouTube Shorts: usually rewards clarity and immediate value, especially when the title and opening line align

That doesn’t mean making three entirely different videos. It means adjusting the framing around the same core insight.

Publish with tracking in mind

A lot of marketers still repurpose video without learning what each clip did.

That’s a mistake, especially because the upside is real. AI montage tools can boost repurposed content engagement by 3x, yet only 22% of marketers effectively track clip-specific ROI, according to the source summarized in Adobe Express’s montage page. If you’re posting shorts to support a business goal, attach campaign logic to the workflow.

A simple tracking stack for repurposed shorts

- Unique post descriptions: Slightly vary your post copy by platform so you can identify which framing worked.

- UTM-linked profile or landing page URLs: That gives you a cleaner path from clip view to site action.

- Clip-level naming conventions: Name exports by source video, topic, and hook style.

- Subtitle consistency: If you need clean on-screen text fast, a subtitle generator can help standardize readability across platforms.
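Two items in this stack, naming conventions and UTM-linked URLs, are easy to standardize with a small script. The sketch below uses only Python's standard library. The helper names, the `__` separator, and the `short_video` medium label are assumptions for illustration; use whatever labels your analytics setup expects.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def slug(text: str) -> str:
    """Lowercase, and collapse anything that is not a letter or digit into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def clip_name(source: str, topic: str, hook_style: str) -> str:
    """Build an export name from source video, topic, and hook style."""
    return "__".join(slug(part) for part in (source, topic, hook_style))


def utm_url(base_url: str, platform: str, campaign: str, clip: str) -> str:
    """Append UTM parameters to base_url, keeping any existing query string."""
    parts = urlsplit(base_url)
    query = parse_qsl(parts.query)
    query += [
        ("utm_source", platform),
        ("utm_medium", "short_video"),  # assumed label, not a standard value
        ("utm_campaign", campaign),
        ("utm_content", clip),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))
```

With this, `clip_name("Ep 42: Pricing Webinar", "Annual Plans", "Question")` gives `ep-42-pricing-webinar__annual-plans__question`, and passing that name as `clip` to `utm_url` ties each site visit back to a specific export.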

A short video is content. A tracked short video is feedback.

Don’t confuse cross-posting with distribution

Cross-posting is mechanical. Distribution is strategic.

If a clip opens with a data point, the caption can frame it one way for marketers and another way for creators. If a clip is educational, the thumbnail text can emphasize the problem on one platform and the result on another. Small shifts like that often matter more than editing another transition.

Use this final checklist before publishing:

| Check | Why it matters |
| --- | --- |
| Does the hook appear immediately? | Slow starts lose attention fast |
| Are captions readable on mobile? | Many people watch muted |
| Is the crop correct in vertical preview? | Export previews can hide framing issues |
| Does the post text match the clip promise? | Misalignment hurts retention |
| Can you measure the outcome? | Otherwise you can’t improve the next batch |

Common Questions About AI Montage Makers

Creators usually hit the same friction points after their first few rounds of repurposing. The tool works, but the edge cases create uncertainty.

Should I use platform audio or AI-generated music?

Use platform-native audio when the audio trend itself is part of the distribution strategy. Use royalty-free or AI-generated music when clarity, brand safety, and reuse matter more.

Modern montage apps can include an AI theme composer that generates original, royalty-free background music, which helps avoid copyright issues and speeds up production, according to the App Store listing for Montage Video Maker & Editor. That matters if you want reusable assets across multiple channels or client accounts. The same source also notes that repurposing can achieve 5 to 10x higher reach for YouTubers compared with the original long-form content, so avoiding takedowns and muted posts is not a minor detail.

If you want a broader look at music options around generation, editing, and workflow decisions, this guide to Top AI Tools for Music Production is useful background.

How does the AI decide what counts as a good clip?

It usually combines transcript meaning, speaking energy, clean topic boundaries, and visual cues.

The important point is that the AI is not judging your brand strategy. It’s judging clip potential based on signals it can measure. That’s why it may choose a dramatic statement over a quieter but more qualified explanation. Sometimes that’s right. Sometimes it isn’t.

What if the AI misses the moment I wanted?

That happens. A lot.

When it does, don’t start over. Use the AI suggestions as reference points, then manually build the clip from the original source using the same principles:

- Find the sentence with standalone value

- Start later than feels comfortable

- End earlier than feels polite

- Add captions that prioritize clarity

- Check the vertical crop before export

Are AI montage makers good enough for professional output?

Yes, if you treat them as accelerators.

No, if you expect them to replace judgment. The best results come from combining machine speed with human taste. Let the tool do detection, rough cutting, caption drafting, and resizing. Keep the final call on hooks, pacing, visual emphasis, and platform fit.

The gap between average and excellent short-form content is rarely the software. It’s whether someone made deliberate editorial choices after the software finished its pass.

If you want to turn webinars, podcasts, interviews, and YouTube videos into social-ready clips without rebuilding your editing process, Klap is built for exactly that workflow. Upload or paste a link, let the AI find strong moments, review the suggested shorts, refine captions and framing, then export for TikTok, Reels, and Shorts.